RNITS · Cybersecurity Service · 11 min read

Deepfake CEO Fraud: How Small Businesses Can Spot and Stop AI Scams

AI deepfakes aren't just a celebrity problem anymore. Here's how fake CEO voices and video calls are hitting small businesses — and what to do about it.

A finance manager in a 40-person manufacturing company in the Midwest gets a video call from the CEO. The CEO is traveling, looks and sounds exactly right, and says he needs a wire transfer processed for a time-sensitive acquisition. The finance manager sends $243,000 to the account provided. The CEO never made that call. The entire video was generated by AI.

That happened in 2025. And it was not an isolated case.

Deloitte projects that fraud losses enabled by generative AI, deepfakes included, could reach $40 billion in the United States by 2027, and small businesses are catching a disproportionate share of the damage. The technology that used to require a Hollywood-level budget now runs on a laptop. An attacker needs about three seconds of someone’s voice, pulled from a conference recording, a YouTube video, an earnings call, or even a voicemail greeting, to clone it convincingly enough to fool colleagues and family members.

If you run a small business in New Hampshire or Massachusetts, this is not a hypothetical risk. It is a current one.

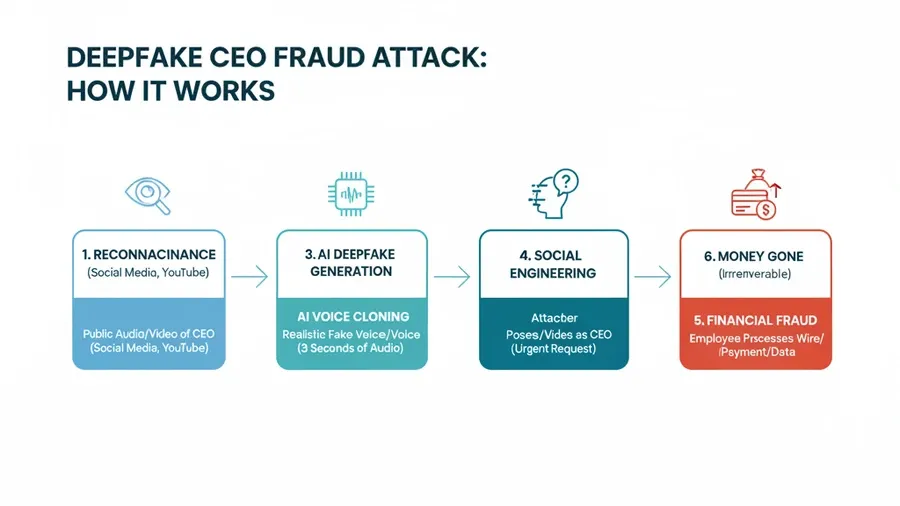

How deepfake scams actually work

The word “deepfake” sounds like science fiction, but the mechanics are not complicated. AI models analyze audio or video of a target person (their voice, facial movements, and mannerisms) and generate new content that mimics them. The output can be pre-recorded media or a live impersonation generated in real time.

There are three main attack vectors hitting small businesses right now.

Fake CEO voice calls

This is the most common variant. An attacker clones the voice of a business owner or executive using publicly available audio — a podcast appearance, a company video, a conference panel, or even a phone greeting. Then they call an employee who handles finances and make an urgent request.

The calls follow a predictable pattern:

- Urgency. “I need this done before end of day.” The pressure is deliberate — it discourages the employee from stopping to verify.

- Secrecy. “Don’t loop anyone else in yet, this is confidential.” This isolates the target from colleagues who might question the request.

- Authority. The voice sounds exactly like the boss. That alone overrides most people’s instincts to double-check.

A law firm in Boston nearly lost $180,000 to this exact playbook in late 2025. The managing partner’s voice was cloned from a webinar recording. The call went to a paralegal who handled vendor payments. The only reason it failed was that the paralegal’s computer was being updated and she could not process the transfer immediately — by the time she tried, the real partner had returned to the office.

Deepfake video calls

This is where it gets worse. Real-time deepfake video now runs on consumer-grade hardware and open-source software. An attacker joins a Zoom, Teams, or Google Meet call looking and sounding like someone you know.

In a case Hong Kong police disclosed in February 2024, a finance worker at a multinational firm (later identified as the engineering company Arup) was tricked into transferring roughly $25 million after a video call where every other participant, including the CFO, was a deepfake. The employee initially suspected phishing when he received the meeting invite, but the video call “confirmed” the request because the people looked and sounded real.

Small businesses are actually more vulnerable to this than large enterprises. In a company with 15 employees, everyone knows what the owner looks and sounds like, and that familiarity is exactly what the attacker exploits. If the owner appears on a video call asking for something, there is no security operations center analyzing the call for anomalies. There is just a person who trusts their eyes and ears.

Manipulated documents and communications

Beyond voice and video, attackers use AI to generate convincing email threads, invoices, and even signed documents that look like they came from people you trust. Pair a fake invoice with a cloned voice call to “confirm” it, and you have a multi-channel attack that is very hard to catch.

An attacker might send a fake invoice from a vendor you actually use, then follow up with a cloned voice call saying “hey, just wanted to make sure you got that invoice — the bank details changed because we switched providers.” Each piece reinforces the other. The email looks right. The voice sounds right. The story makes sense. Without a structured verification process, most people will process the payment.

Why traditional security does not catch this

Most cybersecurity tools catch technical intrusions — malware, unauthorized network access, phishing links, suspicious logins. Deepfake attacks skip past all of it because they exploit human trust, not technical vulnerabilities.

Your email filter will not flag a phone call. Your endpoint detection will not flag a Zoom meeting where someone’s face is being synthesized in real time. Your firewall has nothing to inspect. The “attack surface” is the relationship between two people, and the weapon is a convincing impersonation.

Cybersecurity awareness training helps, but most training programs still focus on email phishing, suspicious links, and password hygiene. Very few cover deepfake voice or video attacks, and even fewer teach employees specific detection techniques.

This gap matters. The technology is moving faster than the training materials.

How to spot a deepfake — practical detection tips

Perfect detection is not realistic — the technology improves every month, and today’s tells might not work six months from now. But right now, there are signs worth watching for.

Audio deepfake red flags

- Unnatural breathing patterns. Real speech includes breaths, pauses, and filler sounds. Cloned voices often sound unnaturally smooth or have breathing that does not match the speech rhythm.

- Flat emotional range. Voice clones struggle with genuine emotional shifts. If the caller sounds oddly flat during what should be a stressful request, that is a flag.

- Audio artifacts. Listen for brief glitches, robotic undertones, or moments where the voice seems to “skip.” These are processing artifacts that current models have not fully eliminated.

- Inconsistent background noise. The ambient sound might shift abruptly or feel artificially added.

- Wrong cadence. If you know the person well, their speaking rhythm — how fast they talk, where they pause, how they start sentences — is distinctive. Clones approximate this but often get the micro-timing wrong.

Video deepfake red flags

- Edge artifacts around the face. Look at the boundary between the face and the background or hairline. Deepfakes often show slight blurring, flickering, or unnatural transitions at these edges.

- Eye contact that is too perfect. Real people on video calls look away, blink irregularly, and shift focus. Deepfakes tend to maintain unnaturally steady eye contact.

- Lighting mismatches. The lighting on the face may not match the lighting in the rest of the frame — shadows falling the wrong direction, skin tone that does not match the environment.

- Mouth sync issues. During fast speech or unusual words, the lip movements may lag or not match the audio precisely.

- Static accessories. Glasses, earrings, or hair may not move naturally with head movements. Some models struggle with reflections on glasses in particular.

None of these are foolproof — a well-funded attacker can fix many of them. But in the attacks we are actually seeing against small businesses, these artifacts show up more often than not. Real-time deepfakes are especially rough around the edges because the processing has to happen on the fly.

Building defenses that actually work

Spotting deepfakes is one layer. But the strongest defense is process — making it structurally hard for one convincing impersonation to turn into a financial loss.

1. Establish out-of-band verification for all financial requests

Any request involving money — wire transfers, payment changes, new vendor setups, payroll modifications — should require verification through a separate channel from the one the request came in on.

If someone calls asking for a transfer, verify by text or in person. If someone emails, call them back on a number you already have on file, not one provided in the email. If the request comes in on a video call, confirm through a separate Slack message, text, or phone call after the meeting ends.

This single policy stops the majority of deepfake fraud attempts, because an attacker who has faked one channel rarely controls a second one that you choose yourself.
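For teams that track payment requests in a script or internal tool, the rule can be written down in a few lines. This is a minimal sketch, not a product feature: the channel names, the `Contact` record, and both function names are hypothetical and only illustrate the policy described above.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical channel names, for illustration only.
CHANNELS = ("phone", "email", "video", "text", "in_person")


@dataclass(frozen=True)
class Contact:
    name: str
    phone_on_file: str  # recorded before the request, never taken from it


def pick_verification_channels(request_channel: str) -> List[str]:
    """Return the channels that may be used to verify a request.

    The channel the request arrived on is always excluded, so an
    impersonated channel can never be used to confirm itself.
    """
    if request_channel not in CHANNELS:
        raise ValueError(f"unknown channel: {request_channel}")
    return [c for c in CHANNELS if c != request_channel]


def callback_number(contact: Contact, number_in_request: Optional[str]) -> str:
    """Always call back on the number on file; ignore any number in the request."""
    return contact.phone_on_file
```

A request that arrives on a video call would return every channel except `"video"`, and a callback always goes to the stored number even when the email or caller supplies a "new" one.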

2. Create a code word system for high-value requests

Some businesses we work with have implemented a rotating code word that changes weekly or monthly. Any financial request above a certain threshold requires the code word. If the caller cannot provide it, the request goes through a manual verification process regardless of who they appear to be.

This is low-tech and effective. An attacker who clones a voice has no way to know a code word that was shared in person or through a secure internal channel.
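If you would rather not invent the code word by hand each time, a short script can pick one and check it. The sketch below is only an illustration under stated assumptions: the word list, the $5,000 threshold, and the function names are made up for the example, and the code word itself should still be shared in person or through a secure internal channel.

```python
import hmac
import secrets

# Hypothetical word list; a real one should be much longer.
WORDS = ["granite", "harbor", "maple", "lantern", "orchard", "quarry"]

CODE_WORD_THRESHOLD = 5_000  # assumed dollar threshold; set by your own policy


def new_code_word(n_words: int = 2) -> str:
    """Pick a fresh code phrase using a cryptographically secure random source."""
    return "-".join(secrets.choice(WORDS) for _ in range(n_words))


def needs_manual_verification(amount: float, supplied: str, current: str) -> bool:
    """Return True when a request must go through manual verification.

    Requests above the threshold need the current code word; anything
    else supplied (or nothing at all) sends the request to manual review.
    """
    if amount <= CODE_WORD_THRESHOLD:
        return False  # the code word rule only applies above the threshold
    supplied_bytes = supplied.strip().lower().encode()
    current_bytes = current.strip().lower().encode()
    # compare_digest avoids leaking how much of a guess matched
    return not hmac.compare_digest(supplied_bytes, current_bytes)
```

Run `new_code_word()` once per rotation (weekly or monthly). Note that this check sits on top of out-of-band verification, it does not replace it.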

3. Implement dual authorization for transfers

No single person should be able to authorize a wire transfer or payment above a defined threshold. Require two people to independently approve the transaction, each verifying through separate channels.

This means even if an attacker successfully convinces one person, the second approver creates another checkpoint. The cost of the process is a few extra minutes per transaction. The cost of skipping it can be six figures.
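Here is a rough sketch of how that rule might look in an internal approval tool. Everything in it is an assumption made for illustration: the $10,000 threshold, the `Transfer` class, and the reading of "separate channels" as two different channels, neither of which is the one the request arrived on.

```python
from dataclasses import dataclass, field
from typing import Dict

DUAL_AUTH_THRESHOLD = 10_000  # assumed dollar threshold; set by your own policy


@dataclass
class Transfer:
    amount: float
    request_channel: str  # how the request arrived, e.g. "video"
    # approver name -> channel that approver used to verify the request
    approvals: Dict[str, str] = field(default_factory=dict)

    def approve(self, approver: str, verified_via: str) -> None:
        """Record an approval, rejecting verification on the request's own channel."""
        if verified_via == self.request_channel:
            raise ValueError("verify on a different channel than the request")
        self.approvals[approver] = verified_via

    def can_release(self) -> bool:
        """Small transfers need one approval; large ones need two people on two channels."""
        if self.amount <= DUAL_AUTH_THRESHOLD:
            return len(self.approvals) >= 1
        two_people = len(self.approvals) >= 2
        two_channels = len(set(self.approvals.values())) >= 2
        return two_people and two_channels
```

With this in place, the $243,000 request from the opening story would stay blocked after the finance manager's approval alone, and would only release once a second person confirmed it through a different channel.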

4. Train specifically on deepfake scenarios

Generic security awareness training does not cover this well enough. Run tabletop exercises specifically focused on deepfake attacks:

- Play audio deepfake examples and ask your team to identify them

- Walk through the scenario of receiving a video call from the CEO with an urgent financial request

- Practice the verification process so it becomes automatic, not something people have to think about under pressure

We run these exercises for our managed detection and response clients, and the difference after even one session is significant. People who have heard a deepfake example are far more likely to pause and verify than people who have only read about the concept.

5. Lock down your executive team’s public audio and video

This is not always practical, but consider how much of your leadership team’s voice and likeness is publicly available. Conference recordings, podcast appearances, YouTube videos, and even long voicemail greetings all provide raw material for voice cloning.

You do not need to disappear from the internet. But be deliberate about what stays public. If there is a recording of your CEO speaking for 20 minutes at a conference three years ago that nobody watches, take it down. Every minute of clean audio makes cloning easier.

6. Use AI-powered meeting security tools

Several tools now offer real-time deepfake detection during video calls. These analyze facial movements, audio patterns, and network metadata to flag potential synthetic media. The technology is early and not perfect, but it adds a detection layer that did not exist two years ago.

If your business runs on video calls, and most do, this is worth evaluating as part of your email and communications security stack.

The regulatory picture is catching up — slowly

Federal and state governments are starting to respond. Several states now have laws criminalizing deepfake fraud, and in 2024 the FTC finalized a rule against impersonating government agencies and businesses and proposed extending it to the impersonation of individuals, citing AI-generated deepfakes. Massachusetts has a bill pending that would create specific penalties for deepfake-enabled financial fraud.

For businesses subject to compliance frameworks like HIPAA, SOC 2, or CMMC, the expectations around identity verification and access controls are tightening. If your compliance audit asks how you verify identity for sensitive requests and your answer is “we recognize their voice on the phone,” that is going to be a finding.

Getting ahead of this now — with documented verification procedures and employee training records — puts you in a much stronger position when the regulatory landscape firms up.

What to do if you think you have been targeted

If you suspect a deepfake attack — whether it succeeded or was caught in time:

- Stop the transaction immediately. If money has been sent, contact your bank. Wire recalls are time-sensitive — hours matter.

- Preserve the evidence. Save any recordings, emails, call logs, or chat messages related to the incident. Do not delete anything.

- Report it. File a report with the FBI’s Internet Crime Complaint Center (IC3) and your local law enforcement. Even if you caught it before any damage, the report helps track patterns and may prevent attacks on other businesses.

- Notify your team. If one person was targeted, others may be next. Alert your organization immediately and reinforce the verification procedures.

- Review and tighten your processes. Every attempted attack is an opportunity to find gaps. What allowed the attacker to get as far as they did? What process change would have stopped them earlier?

The bottom line

Deepfake technology is not going backward. The tools keep getting cheaper and more convincing. Right now, an attacker with $50 in cloud compute and three seconds of your voice can generate a phone call that would fool your spouse.

The good news is that the defenses are not complicated. Out-of-band verification, dual authorization, code words, and targeted training do not require a massive security budget or a dedicated SOC. They require process discipline and awareness that this threat is real and current — not something that only happens to large corporations in news articles.

If you are not sure where your business stands on deepfake readiness, start with an assessment. We offer a free cybersecurity audit that evaluates your current verification procedures, employee training, and technical controls against the threats that are actually targeting small businesses right now — including AI-powered impersonation.

Need help protecting your business from AI-powered fraud? Call us at (978) 226-8931 or request your free cybersecurity audit — we’ll assess your current defenses and build a plan that fits your budget and your risk.